Introduction

Synthetic Intelligence (SI) is often perceived as the next frontier of technological and cognitive advancement, yet its development is deeply rooted in decades of research, experimentation, and philosophical inquiry. Unlike traditional Artificial Intelligence (AI), which focuses primarily on simulating intelligent behavior, SI aims to replicate human-like cognition, integrating reasoning, adaptability, creativity, and learning in ways that closely mirror human thought.

Understanding the history and evolution of SI provides essential context for appreciating its potential and the challenges it faces today. This exploration traces the early conceptual roots of machine intelligence, examines key milestones in research, and highlights the ongoing transition from AI models to modern SI systems.

Early Roots of Machine Intelligence

The conceptual foundations of Synthetic Intelligence stretch back over a century, drawing inspiration from mathematics, logic, philosophy, and early computer science.

1. Philosophical Foundations

The seeds of SI can be traced to philosophical inquiries about the nature of intelligence and thought. Early philosophers such as René Descartes and Gottfried Wilhelm Leibniz explored the idea that reasoning could follow formal rules and patterns, suggesting the theoretical possibility of mechanized cognition.

- Leibniz proposed a universal calculus, a symbolic system through which reasoning could be reduced to calculation.
- Alan Turing laid the groundwork for modern computing and artificial intelligence with his 1936 formulation of the Turing machine, an abstract device that can in principle carry out any computation that can be expressed as a step-by-step procedure.

These philosophical and mathematical explorations provided the intellectual framework for imagining machines capable of intelligent thought.
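The Turing machine idea mentioned above can be made concrete with a short simulation. The sketch below is only an illustration: the transition table, the state names (`right`, `carry`, `halt`), and the helper `run_turing_machine` are assumptions chosen for this example, not taken from Turing's paper. It shows a machine that adds one to a binary number written on its tape.

```python
# Minimal Turing machine simulator (illustrative sketch).
# The rule table is a hypothetical example that increments a binary number.

BLANK = "_"

# (state, symbol read) -> (symbol to write, head move, next state)
INCREMENT_RULES = {
    ("right", "0"): ("0", +1, "right"),      # scan right past the digits
    ("right", "1"): ("1", +1, "right"),
    ("right", BLANK): (BLANK, -1, "carry"),  # step back onto the last digit
    ("carry", "1"): ("0", -1, "carry"),      # 1 + 1 = 0, carry moves left
    ("carry", "0"): ("1", 0, "halt"),        # absorb the carry and stop
    ("carry", BLANK): ("1", 0, "halt"),      # all digits were 1: add a new digit
}


def run_turing_machine(rules, tape_input, start="right", halt="halt", max_steps=10_000):
    """Run the machine and return the non-blank tape contents as a string."""
    tape = dict(enumerate(tape_input))  # sparse tape: position -> symbol
    head, state, steps = 0, start, 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        symbol = tape.get(head, BLANK)
        write, move, state = rules[(state, symbol)]
        tape[head] = write
        head += move
        steps += 1
    return "".join(tape[i] for i in sorted(tape) if tape[i] != BLANK)


print(run_turing_machine(INCREMENT_RULES, "1011"))  # prints 1100 (binary 11 + 1 = 12)
```

The whole "program" lives in the rule table. Swapping in a different table gives a different computation without touching the simulator, which is the sense in which one simple mechanism can perform many calculable tasks.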

2. Early Computational Experiments

The mid-20th century marked the first practical attempts to build machines that could replicate aspects of human intelligence:

-

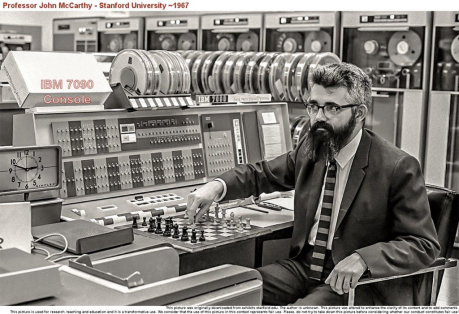

1950s – The Dartmouth Conference: Often considered the birthplace of AI as a formal discipline, the Dartmouth Summer Research Project on Artificial Intelligence (1956) brought together researchers to explore “thinking machines.”

-

Logic Theorist (1955): Created by Allen Newell and Herbert A. Simon, this program could prove mathematical theorems and is regarded as one of the first artificial intelligence programs.

-

Early Neural Networks (1950s–1960s): Models such as perceptrons were designed to simulate neuron-based computation in the human brain, an initial attempt to mimic cognitive processes.

While these early systems were primitive compared to today’s technology, they represented the first concrete steps toward creating machines capable of reasoning and learning, which would later inform Synthetic Intelligence.
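For readers who want to see what a perceptron of that era actually computes, here is a minimal sketch under stated assumptions. The function names `predict` and `train_perceptron`, the learning rate, and the choice of the logical AND task are all illustrative; the update rule is the standard perceptron rule (adjust weights by the prediction error times the input).

```python
# Single-layer perceptron trained with the classic perceptron update rule.
# Task (an assumption for this example): learn the logical AND of two inputs.

def predict(weights, bias, inputs):
    """Fire (return 1) if the weighted sum of inputs exceeds zero."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Return (weights, bias) learned from (inputs, target) pairs."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                # Move the decision boundary toward the misclassified point
                weights = [w + lr * error * x for w, x in zip(weights, inputs)]
                bias += lr * error
        if mistakes == 0:  # every sample classified correctly: stop early
            break
    return weights, bias


AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "(target", target, ")")
```

A single perceptron can only separate classes with a straight line (or hyperplane), so it learns AND but cannot learn XOR. That limitation is part of why multi-layer networks later became important.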

Key Milestones in SI Research

The journey from theoretical ideas to modern SI has been shaped by numerous milestones in computing, neuroscience, and cognitive science.

1. Cognitive Science and AI Foundations (1960s–1970s)

During this period, researchers began to study human cognition systematically:

- Cognitive architectures: Research on modeling human problem-solving in this period laid the groundwork for architectures such as Soar and ACT-R, which took shape in the 1980s and 1990s and aim to replicate memory, learning, and reasoning in a structured system.
- Expert Systems: Rule-based programs like MYCIN demonstrated that machines could simulate specialized human reasoning in medical diagnostics. These efforts, though limited to specific domains, provided the blueprint for more general cognitive models.

2. Neural Networks and Connectionism (1980s–1990s)

The rediscovery of neural networks led to significant advancements:

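- Backpropagation algorithm: Popularized in the mid-1980s, it allowed multi-layered neural networks to learn complex patterns by propagating output errors backward through the network to adjust the weights of each layer.

These models attempted to mirror the human brain’s interconnected neurons, marking one of the first large-scale attempts at simulating cognitive processes computationally.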
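To illustrate how backpropagation lets a multi-layered network learn, the following sketch applies the chain rule to a tiny network with two inputs, two hidden units, and one output. Everything specific here is an assumption made for the example: the network size, the sigmoid activation, the squared-error loss, the starting weights, and the names `forward`, `backprop`, and `sgd_step`.

```python
import math

# Backpropagation on a tiny 2-2-1 sigmoid network (illustrative sketch).
# params = (w1, b1, w2, b2): hidden weights/biases, output weights/bias.


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Return the hidden activations and the network output."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output


def loss(params, x, target):
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backprop(params, x, target):
    """Gradients of the loss with respect to every parameter."""
    w1, b1, w2, b2 = params
    hidden, output = forward(params, x)
    # Output layer: error times the sigmoid derivative
    delta_out = (output - target) * output * (1.0 - output)
    grad_w2 = [delta_out * h for h in hidden]
    # Hidden layer: send the output error backward through w2
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w2, hidden)]
    grad_w1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_w1, delta_hidden, grad_w2, delta_out


def sgd_step(params, x, target, lr=0.5):
    """Return new parameters after one gradient-descent update."""
    w1, b1, w2, b2 = params
    g_w1, g_b1, g_w2, g_b2 = backprop(params, x, target)
    return (
        [[w - lr * g for w, g in zip(row, g_row)] for row, g_row in zip(w1, g_w1)],
        [b - lr * g for b, g in zip(b1, g_b1)],
        [w - lr * g for w, g in zip(w2, g_w2)],
        b2 - lr * g_b2,
    )


# Arbitrary starting weights and a single training example (assumptions).
params = ([[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1], [0.7, -0.4], 0.05)
x, target = [1.0, 0.5], 1.0
print("loss before:", loss(params, x, target))
for _ in range(200):
    params = sgd_step(params, x, target)
print("loss after: ", loss(params, x, target))
```

The key step is `delta_hidden`: the hidden layer never sees the target directly, so its error signal is computed from the output error and the connecting weights. Real systems use matrix libraries and many training examples, but the chain-rule logic is the same.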

3. Symbolic vs. Subsymbolic Debate

A crucial development in the history of SI research was the debate between symbolic AI (logic-based, rule-driven reasoning) and connectionist AI (neural network-based, pattern recognition).

- Symbolic AI excelled in reasoning with explicit rules but struggled with ambiguity.
- Connectionist approaches excelled in learning from experience but lacked explicit reasoning capabilities.

Synthetic Intelligence researchers recognized that combining these approaches—neural-symbolic systems—might be the key to achieving human-like cognition.

4. Emergence of Machine Learning and Deep Learning (2000s–2010s)

The explosion of data and computational power led to more sophisticated AI, which indirectly fueled SI research:

- Deep Learning: Neural networks with many layers, trained on massive datasets, allowed machines to learn patterns from accumulated examples, loosely analogous to the way humans learn from experience.
- Reinforcement Learning: Enabled machines to learn from trial and error, a method akin to human experiential learning.

These developments bridged some gaps between task-specific AI and the cognitive ambition of SI.
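The trial-and-error learning described above can be sketched with tabular Q-learning, one well-known reinforcement learning method. The environment here is a hypothetical five-cell corridor invented for this example, and the names `step`, `q_learning`, and `greedy_policy`, along with the hyperparameters, are assumptions. The agent acts at random, receives a reward only when it reaches the rightmost cell, and gradually learns which action is best in each cell.

```python
import random

# Tabular Q-learning on a hypothetical 5-cell corridor (illustrative sketch).
N_STATES = 5           # cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)     # move left or move right
GOAL = N_STATES - 1


def step(state, action):
    """Move within the corridor; reward 1.0 only on reaching the goal."""
    next_state = min(max(state + action, 0), GOAL)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, max_steps=100, seed=0):
    """Learn action values from random trial and error."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _episode in range(episodes):
        state = 0
        for _step in range(max_steps):
            action = rng.choice(ACTIONS)  # explore by acting at random
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # Nudge the estimate toward reward + discounted future value
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best known action for each non-goal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]


q_table = q_learning()
print(greedy_policy(q_table))  # +1 means "move right"
```

Nothing tells the agent that moving right is correct. The preference emerges because reward information flows backward from the goal through repeated updates, which is the sense in which the method resembles learning from experience.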

5. Early SI-Specific Models (2010s–Present)

While AI models focused on task performance, SI research began emphasizing cognitive replication:

- Cognitive Architectures for SI: Advanced models like CLARION and LIDA aim to simulate human learning, decision-making, and consciousness-like processes.
- Embodied Cognition Research: Robots and agents interacting with real-world environments provided insight into how intelligence emerges from perception and action, not just abstract computation.
- Neural-Symbolic Integration: Efforts to combine reasoning with learning continue to define modern SI development.

Transition from AI Models to SI Models

The distinction between AI and SI is subtle but important. While AI focuses on outcomes—producing intelligent behavior—SI emphasizes underlying cognitive processes, aiming to replicate the mechanics of thought itself.

1. Limitations of AI Models

Traditional AI systems, even deep learning models, are often narrow and task-specific:

- They excel in areas like image recognition, speech translation, or game-playing.
- They struggle with generalization, creativity, and reasoning in novel contexts.

These limitations prompted researchers to look beyond AI, seeking systems that can think, adapt, and reason holistically—the hallmark of Synthetic Intelligence.

2. Principles Guiding SI Models

Modern SI systems are built on principles distinct from conventional AI:

- Human-like Reasoning: Incorporating analogy, intuition, and abstraction.
- Adaptive Learning: Moving beyond data memorization to context-sensitive learning.
- Integration of Symbolic and Subsymbolic Processing: Balancing logic-based reasoning with pattern recognition.
- Embodiment: Leveraging physical or simulated environments to develop emergent intelligence.

3. Examples of SI Research in Action

- Cognitive Robotics: Robots designed to understand, learn, and adapt in complex, real-world environments.
- Artificial General Intelligence (AGI) Experiments: Projects aiming for systems that can perform any intellectual task a human can, inspired by SI principles.
- Human-Machine Collaboration Tools: Platforms that mimic human reasoning to support complex decision-making.

This transition from AI to SI represents a paradigm shift: from building tools that appear intelligent to constructing systems that possess intelligence in a synthetic but human-like form.

Challenges in the Evolution of SI

While progress has been significant, Synthetic Intelligence faces several key challenges:

- Complexity of Human Cognition: Human thought involves consciousness, emotion, and intuition, all of which are difficult to model synthetically.
- Ethical and Philosophical Concerns: SI raises questions about autonomy, responsibility, and potential rights for intelligent systems.
- Computational and Resource Constraints: High-fidelity SI models require enormous computational power and sophisticated architectures.
- Validation and Testing: Determining whether a system truly exhibits intelligence, rather than mimicking it, remains a philosophical and practical challenge.

Conclusion

The history and evolution of Synthetic Intelligence is a story of curiosity, imagination, and relentless innovation. From the philosophical musings of early thinkers to the computational experiments of the 20th century, and finally to the cutting-edge cognitive architectures of today, SI represents the aspiration to bridge the gap between machine behavior and human thought.

While AI continues to transform industries through automation and predictive modeling, SI promises a more profound leap: machines that think, reason, and learn like humans, potentially transforming healthcare, defense, robotics, education, and countless other fields.

As research advances, the transition from AI to SI reflects humanity’s enduring quest to understand intelligence itself—not just to replicate its outputs but to synthesize its essence. By studying the history and evolution of SI, we gain insight not only into technology but into the very nature of human cognition and the future of intelligent systems.